Financial certifications: Is AI the beginning of the end?

If a certification signifies expertise because its holder answered a set of multiple-choice questions (MCQs), but an LLM answers them *way* better, what is the purpose of a certification?

In late 2019, the credentialing space was apparently "disrupted" by the introduction of the Financial Data Professional (FDP) program. At the time, I briefly considered studying the FDP syllabus, but I saw better ways to develop my data science skills. Notably, the program delegated its coding prerequisites (in either R or Python) to DataCamp, where I had already gone beyond the assigned introductory courses. But mostly I just felt that answering exam questions was an inferior, if not silly, way to master data science.

Less than five years later, AI has disrupted the FDP. The FDP Institute has paused the exam through at least 2024, explaining (emphasis mine):

"With a near revolution in AI and machine learning and their implications for the financial industry, it is the right time to be taking a full inventory of our curriculum readings and learning objectives, as the relevancy of our curriculum must never be compromised.

After the April 2024 exam session closes, the administration of exams will pause while this review is taking place …Now is the time to evaluate and self-test not only coverage of some newer tools such as generative AI, but to understand what this also means around ethics and a fiduciary duty which is being tested and stretched in new ways, in real time." — Important Update to FDP Program, 12/2023

Come again? The FDP is supposed to be ahead of the curve! I had two immediate reactions:

1. Existing FDP Charterholders are likely miffed. After all, they paid $1,350 for the obsolete (er, prior) curriculum, the one developed before GPT-3 was released. Oops, sorry!

2. Doesn't the act itself (the need to pause, and the inability to update the curriculum in real time) call the whole concept into question?

I guess you could argue the institute is innovating to acknowledge the moment (I feel obligated to stretch myself with a counterargument). I guess.

Out of curiosity, I asked GPT-4 to summarize the evolution of the curriculum from 2019 to 2024. On structure and content, it replied:

"2019: The curriculum emphasized a broad introduction to data science, big data, and machine learning, including regression, classification, clustering, and ethical issues.

2024: The latest guide places a stronger emphasis on advanced topics, including neural networks, reinforcement learning, textual analysis with large language models (e.g., ChatGPT), and fintech applications. It maintains a focus on ethics, privacy, and regulation, integrating these considerations throughout the curriculum." — Source: GPT-4’s comparative analysis

That sounds like smart evolution. And the FDP’s learning objectives (LOs) are impressive. If I knew all of their LOs (which is not nearly the case), I'd know a lot of hard stuff that matters.

Isn't the deeper problem more obvious? The valuable skills an FDP candidate seeks may not lend themselves to the traditional multiple-choice exam, which certification bodies often build and score using item response theory (IRT). Yes, I do realize the point of a certification exam is not to train candidates in test taking; the exam is supposed to assess the learner's mastery of the underlying material. But that is not how many (most?) people participate in these commercial programs! These certification exams are expensive career taxes. The customers (aka, learners) seek first to pass the exam, and the single best way to do that is to answer as many practice questions as possible; aka, to revise for the exam.

Isn’t the safe hypothesis simply that AI disrupts the item response theory (IRT) approach to testing itself?

Tim Dasey writes that "AI is gobbling expertise—the core purpose of conventional schooling … Schools are expertise factories, but we need wisdom factories." [1] The traditional certification exam is geared toward the transmission of expertise. It is not geared toward wisdom. Is it geared toward skill acquisition? Tough question.

I pulled up the FDP’s latest set of sample questions. Here are the first two:

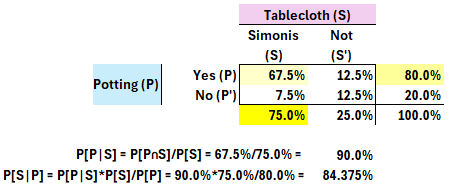

"1. Frank plays pool on Simonis tablecloth 75% of the time. His overall potting success rate is 80%, which increases to 90% when he plays on Simonis tablecloth. What is the probability that Frank is playing on Simonis tablecloth if he has successfully potted a ball?"

This is a classic financial exam question. It’s a good example because we all need to know how to answer it. (Or so I thought three years ago!) We need to apply Bayes' theorem. It’s a trivial problem for GPT. For my part, I like to draw out the probability matrix [2].
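For reference, here is the Bayes' theorem arithmetic for question 1, writing S for "playing on Simonis tablecloth" and H for "successfully potted a ball" (the symbols are my shorthand, not the exam's):

$$
P(S \mid H) = \frac{P(H \mid S)\,P(S)}{P(H)} = \frac{0.90 \times 0.75}{0.80} = \frac{0.675}{0.80} = 0.84375 \approx 84.4\%
$$

The numerator, 0.675, is the joint probability $P(S \cap H)$: the share of all shots that are both on Simonis cloth and potted.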

"2. In the k-nearest neighbors approach to classification, what is the impact of increasing k on the decision boundary?"

This is a tricky question. Personally, I hate it because it’s lazy. Although I’ve written a tutorial on k-nearest neighbors (k-NN), I admit that I wasn’t sure of the correct answer here because I got caught up in whether it refers to a 2- or n-dimensional space, an unstated assumption. I see this a lot in these exams: there is often a textbook-correct answer that ignores complications raised precisely by candidates who “know too much”.
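For what it's worth, the usual textbook answer is that increasing k smooths the decision boundary: each prediction is a majority vote over more neighbors, so isolated or noisy points stop carving out their own little pockets. Below is a minimal pure-Python sketch of that effect. The 1-D dataset, the query grid, and the helper names `knn_predict` and `boundary_crossings` are hypothetical illustrations, not from the FDP question, and working in one dimension deliberately sidesteps the 2- vs n-dimensional ambiguity above. It counts how often the predicted class flips along a line of query points.

```python
from collections import Counter

# Hypothetical 1-D training data: class 0 on the left, class 1 on the
# right, plus one "noisy" class-1 point at x = 3 inside the class-0 region.
TRAIN_X = list(range(10))
TRAIN_Y = [0, 0, 0, 1, 0, 0, 1, 1, 1, 1]


def knn_predict(x, k):
    """Brute-force k-NN: majority vote among the k training points nearest to x."""
    nearest = sorted(range(len(TRAIN_X)), key=lambda i: abs(TRAIN_X[i] - x))[:k]
    votes = Counter(TRAIN_Y[i] for i in nearest)
    return votes.most_common(1)[0][0]


def boundary_crossings(k):
    """Count predicted-class changes across query points 0.2, 0.7, ..., 9.7."""
    # Offsets of .2/.7 keep every query off a midpoint, so there are no distance ties.
    queries = [i / 2 + 0.2 for i in range(20)]
    preds = [knn_predict(q, k) for q in queries]
    return sum(a != b for a, b in zip(preds, preds[1:]))


for k in (1, 5):
    print(k, boundary_crossings(k))
# 1 3   (the noisy point at x = 3 gets its own island)
# 5 1   (a single, smoother boundary)
```

Odd values of k are used so a two-class vote can never tie. The flip side, not shown here, is that a very large k oversmooths: at k = 10 every query would simply get the majority class.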

The question is: are these MCQs good (or important, or useful) evaluators of skill? If not, could they be better? Or is testing for expertise, to some degree, obsolete? If I can copy and paste the question text into an LLM prompt (which I did), and the LLM generates a perfect and perfectly informative response, what exactly about Bayes' theorem do I need to know? I don’t know the answer to this last question. But "nothing" is not a satisfying answer; for example, I do need to know the difference between joint and conditional probabilities.

Of course, I don’t know whether generative AI will render financial certifications totally obsolete. But I do believe it’s the beginning of the end of the traditional exam format. Testing for comprehension, or even expertise, is insufficient when careers demand our proficiency as centaurs/cyborgs who can delegate (at least) some of the knowledge acquisition and synthesis to AI. Knowing stuff (aka, being an expert) was never quite sufficient in itself, but it often carried you a long way, boosted your professional signal, and sometimes signified mastery and capability on its own. Today anybody can summon the expertise, albeit imperfectly. Ergo, testing for comprehension via the multiple-choice question, even a very well-designed one, no longer fulfills the mission.

[2] Here’s my probability matrix for this question. I colored the given inputs in yellow (first 75% in the brightest yellow, then 80%, then 67.5% = 90% × 75% in the lightest yellow). Once these three cells are filled in, the remaining cells are easy to populate, and we can answer any conditional probability question.
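The same matrix can be sketched in a few lines of Python. This is a minimal illustration, assuming a 2×2 joint table (rows: Simonis vs. other tablecloth; columns: potted vs. missed); the labels and the helpers `marginal` and `conditional` are hypothetical names of mine, not anything from the FDP materials. It fills the three given cells, derives the rest by subtraction, and then reads conditionals off the table.

```python
# A 2x2 joint probability table for the Frank/Simonis question.
# Keys are (tablecloth, outcome); labels and helper names are illustrative.

P_SIMONIS = 0.75            # P(Simonis): given
P_POT = 0.80                # P(potted): given
P_POT_GIVEN_SIMONIS = 0.90  # P(potted | Simonis): given

# The third "yellow" cell is the joint P(Simonis and potted) = 90% * 75%.
p_simonis_pot = P_POT_GIVEN_SIMONIS * P_SIMONIS  # 0.675

# Every other cell follows by subtraction, because each row and column
# of the joint table must sum to its marginal probability.
joint = {
    ("simonis", "pot"): p_simonis_pot,
    ("simonis", "miss"): P_SIMONIS - p_simonis_pot,
    ("other", "pot"): P_POT - p_simonis_pot,
    ("other", "miss"): (1 - P_SIMONIS) - (P_POT - p_simonis_pot),
}


def marginal(table, value, axis):
    """Sum the joint cells whose key matches `value` on `axis` (0 = cloth, 1 = outcome)."""
    return sum(p for key, p in table.items() if key[axis] == value)


def conditional(table, cloth, outcome, given_axis):
    """Joint cell divided by the marginal of the conditioning variable."""
    given_value = (cloth, outcome)[given_axis]
    return table[(cloth, outcome)] / marginal(table, given_value, given_axis)


# Question 1: P(Simonis | potted) = 0.675 / 0.80
print(round(conditional(joint, "simonis", "pot", given_axis=1), 5))  # 0.84375
# A question the matrix answers for free: P(potted | other cloth)
print(round(conditional(joint, "other", "pot", given_axis=0), 5))    # 0.5
```

The design choice mirrors the spreadsheet: storing joint probabilities (rather than conditionals) makes every marginal a simple sum and every conditional a single division.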