Can GPT-4 analyze DELL's balance sheet from its 10-K? Yes, if coached.

I uploaded DELL's balance sheet from the 10-K without cleaning it. GPT-4 is a good analyst if coached (e.g., on working capital and leverage), and it nailed the cash conversion cycle (CCC).

I started this substack to informally share experiments as I practice and explore applications of LLMs to finance¹. For one project, I'd like to see if I can build an AI equity analyst. Today's humble start to that project: a brief test of GPT-4's ability to process financial statements from the 10-K.

As a happy shareholder of Dell Technologies (my DELL position is +193% over my cost basis of $40.35), I uploaded its three key financial statements.

Here is my conversation with GPT-4, from before I realized that I wasn't even using OpenAI's Data Analyst GPT. I'm impressed; who wouldn't be?

Some observations:

Files: The upload is a direct share of the tables in the 10-K, i.e., imperfect but fairly structured data in an XLS format that required zero cleaning by me².

Working capital: By my check, it nails the working capital question ("This indicates a decrease in working capital of −$3,244 million from 2023 to 2024.")
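If you want to check that kind of answer yourself, the arithmetic is simply current assets minus current liabilities for each year, followed by the year-over-year difference. Below is a minimal sketch. The function names are hypothetical, and the numbers are toy values rather than Dell's. You would plug in the current-asset and current-liability totals from the balance sheet.

```python
def working_capital(current_assets: float, current_liabilities: float) -> float:
    """Working capital = current assets minus current liabilities (same units, e.g. $ millions)."""
    return current_assets - current_liabilities


def working_capital_change(ca_prior: float, cl_prior: float,
                           ca_current: float, cl_current: float) -> float:
    """Year-over-year change in working capital; a negative value means it decreased."""
    return working_capital(ca_current, cl_current) - working_capital(ca_prior, cl_prior)


# Toy numbers only (not Dell's): WC goes from +20 to -5, a change of -25.
print(working_capital_change(100, 80, 90, 95))  # -25
```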

Leverage ratios: Because Dell has negative book equity, it needed help calculating meaningful leverage ratios.
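The problem with negative book equity is mechanical: debt/equity flips sign, so a heavily indebted company can show a "negative" leverage ratio. One workaround is to fall back on ratios whose denominators don't involve equity, such as debt-to-assets. This is my own illustration, not necessarily what GPT-4 proposed. It is a minimal sketch with hypothetical function names and toy numbers.

```python
from typing import Optional


def debt_to_equity(total_debt: float, book_equity: float) -> Optional[float]:
    """Book debt-to-equity, or None when book equity is zero or negative.

    With negative equity the ratio turns negative (or divides by zero),
    so it no longer ranks companies by leverage.
    """
    if book_equity <= 0:
        return None
    return total_debt / book_equity


def debt_to_assets(total_debt: float, total_assets: float) -> float:
    """Debt-to-assets has no equity term, so it stays interpretable
    even when book equity is negative."""
    return total_debt / total_assets


# Toy numbers only: negative book equity makes D/E meaningless.
print(debt_to_equity(100, -10))  # None
print(debt_to_assets(100, 400))  # 0.25
```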

For invested capital, it needed help, but it did find one answer ("Using the alternative definition for invested capital: Invested Capital = Total Assets − NIBCLs = $82,089 million − $41,512 million = $40,577 million")
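The subtraction in GPT-4's answer is easy to verify. Here is a minimal sketch using only the two figures quoted above (in $ millions). Deciding which current liabilities count as NIBCLs is a judgment call. Typically they are operating items like accounts payable and accrued expenses, as opposed to short-term debt, and this sketch assumes you've already made that call.

```python
def invested_capital(total_assets: float, nibcls: float) -> float:
    """Invested capital under the definition GPT-4 used:
    total assets minus non-interest-bearing current liabilities (NIBCLs)."""
    return total_assets - nibcls


# Figures ($ millions) as quoted in GPT-4's answer.
print(invested_capital(82_089, 41_512))  # 40577
```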

As I've seen many times before, GPT-4 will not necessarily reveal problems with your assumptions (or mistakes in your own reasoning), but it's remarkably good when you follow up with a question about the drawbacks/weaknesses of your own query! In this case:

After it responded to my question about solvency ratios, I followed up with "In the solvency ratios, we are using the balance sheet's recorded equity (aka, book equity). Is there any drawback to this, and if so, what alternative do we have to improve the solvency measures?" I was trained (a long, long time ago) to replace book equity with market equity …

… and this is exactly what I was looking for among GPT-4's replies: "Market Value of Equity: Instead of using book equity, use the market value of equity (i.e., market capitalization for publicly traded companies). This incorporates the market's current view and expectations about the company's future earnings and risk, offering a potentially more realistic measure of equity."
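Swapping in market equity is a one-line change to a solvency ratio like debt-to-capital. This is a minimal sketch with hypothetical names and toy numbers. It assumes you supply the share count and price yourself, in consistent units (e.g., millions of shares × $ price gives $ millions). With non-negative debt and a positive market cap, the ratio stays between 0 and 1, whereas negative book equity can push it above 1.

```python
def market_cap(shares_outstanding: float, share_price: float) -> float:
    """Market value of equity = shares outstanding x share price."""
    return shares_outstanding * share_price


def debt_to_capital(total_debt: float, equity: float) -> float:
    """Debt / (debt + equity). With non-negative debt and a positive market cap
    as `equity`, the result stays between 0 and 1; negative book equity can push it above 1."""
    return total_debt / (total_debt + equity)


# Toy numbers only (not Dell's), in $ millions and millions of shares.
debt = 100.0
print(debt_to_capital(debt, -20.0))                   # 1.25 (book equity, hard to read)
print(debt_to_capital(debt, market_cap(10.0, 30.0)))  # 0.25 (market equity = 300)
```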

Cash conversion cycle (CCC): Bam! Yes, -46 days does look correct to me. Sure, I needed to add a nudge, but the initial prompt was just "What additional information do you need to compute Dell's cash conversion cycle (CCC)?" Amazeballs.
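For reference, the CCC is days inventory outstanding plus days sales outstanding minus days payables outstanding. A negative CCC like Dell's means suppliers are effectively financing operations. Below is a minimal sketch with toy numbers. It assumes DIO and DPO are computed on COGS and DSO on revenue, with a 365-day year. I don't know which conventions (ending vs. average balances, 365 vs. 360 days) GPT-4 used to get its figure.

```python
def cash_conversion_cycle(inventory: float, receivables: float, payables: float,
                          revenue: float, cogs: float, days: int = 365) -> float:
    """CCC = DIO + DSO - DPO, in days.

    Uses whatever balances you pass in (ending or average); `days` defaults to 365.
    """
    dio = inventory / cogs * days       # days inventory outstanding
    dso = receivables / revenue * days  # days sales outstanding
    dpo = payables / cogs * days        # days payables outstanding
    return dio + dso - dpo


# Toy numbers only: large payables relative to COGS push the CCC negative.
print(round(cash_conversion_cycle(10, 20, 60, revenue=365, cogs=365), 1))  # -30.0
```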

When I went to create charts, I belatedly realized (at that moment in the informal conversation) that I had forgotten to invoke GPT-4's Data Analyst. Oops. None of the above natively invoked Data Analyst.

I then switched over to Data Analyst. Because I cannot seem to share the conversation with Data Analyst³ (apparently I'm not alone), I'll just summarize that subsequent, new conversation:

Data Analyst expertly calculated the CCC of -46 days, pulling from two different statements, with almost no help from me. I only had to remind it that "I uploaded the balance sheet and the income statement, so you should be able to retrieve these compenents [sic]." Interesting.

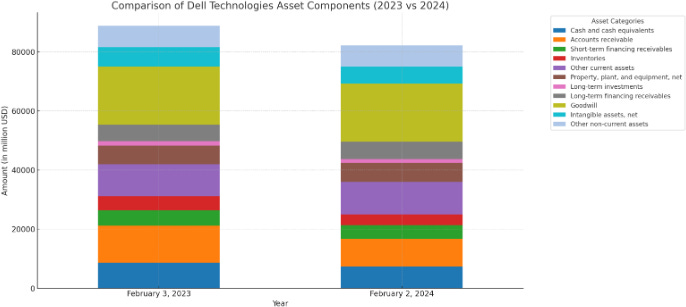

It correctly visualized the assets (see below)

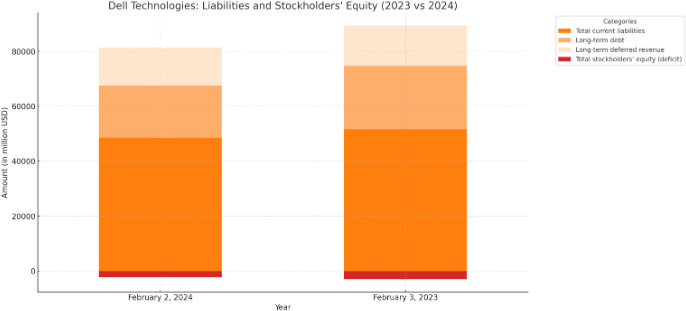

With some help, it also visualized the right-hand side of the B/S, including suggesting a color scheme (see below).

My summary experience is that GPT-4 is a super fast, brilliant analyst who is very sensitive to variations in my prompts. "He" or "she" (the LLM as analyst) is clearly 100x or 10,000x faster than me. This speed factor, I am convinced, is decisive and has disruptive implications. As with co-pilot coding, there is a back-and-forth, and much of it implicates my own imprecision. For example, I added some reconciling calculations to the XLS, but those annotations rightly confused the LLM. When I re-uploaded the clean file, it found the accounts without a glitch (we're writing natural-language code here, so we will naturally write buggy prompts). Its arithmetic was generally solid, as you can see.

¹ My other substack, fintechie, aspires to a bit more polish when it comes to finance and fintech. This substack is more like a personal notebook that I barely edit.

² An XLS that confirms the calculations is TBD here.

³ The error message ("Failed to copy link to clipboard - could not create link") is apparently the common response when sharing Data Analyst threads, at the moment.